Exp-lookit-change-detection Class

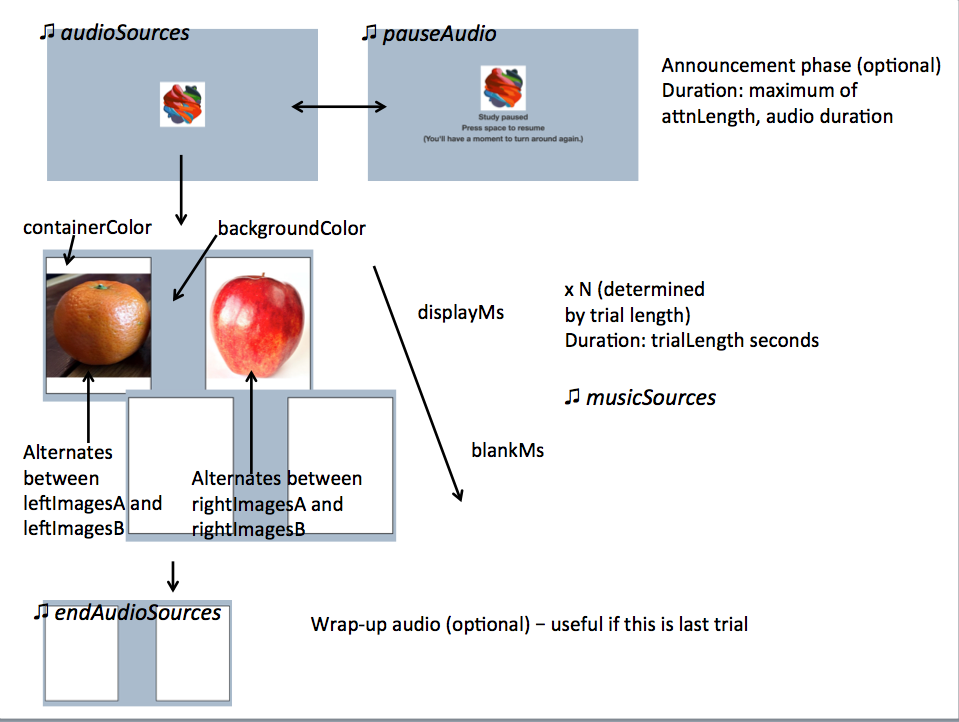

Frame for a preferential looking "alternation" or "change detection" paradigm trial, in which separate streams of images are displayed on the left and right of the screen. Typically, images on one side alternate between two categories (e.g., images of 8 vs. 16 dots, or images of cats vs. dogs), while images on the other side all come from the same category.

The frame starts with an optional brief "announcement" segment, where an attention-getter video is displayed and audio is played. During this segment, the trial can be paused and restarted.

If doRecording is true (default), then we wait for recording to begin before the actual test trial can begin. We also always wait for all images to pre-load, so that there are no delays in loading images that affect the timing of presentation.

You can customize the appearance of the frame: background color overall, color of the two rectangles that contain the image streams, and border of those rectangles. You can also specify how long to present the images for, how long to clear the screen in between image pairs, and how long the test trial should be altogether.
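As a rough planning aid, the timing parameters can be combined to estimate how many image pairs a trial will show. This is only a sketch: it assumes each cycle lasts exactly displayMs + blankMs, and the helper name estimatePresentations is hypothetical (it is not part of the frame). Actual counts may differ slightly depending on how the frame schedules the first and last presentation.

```javascript
// Hypothetical helper (not part of the Lookit API): estimates how many
// image pairs fit in one test trial, assuming each cycle is
// displayMs of images followed by blankMs of blank screen.
function estimatePresentations(trialLengthSeconds, displayMs, blankMs) {
  const cycleMs = displayMs + blankMs;
  if (cycleMs <= 0) {
    throw new Error('displayMs + blankMs must be positive');
  }
  return Math.floor((trialLengthSeconds * 1000) / cycleMs);
}

// trialLength 15 s, displayMs 500, blankMs 250
console.log(estimatePresentations(15, 500, 250)); // 20
```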

You provide four lists of images to use in this frame: leftImagesA, leftImagesB, rightImagesA, and rightImagesB. The left stream will alternate between images in leftImagesA and leftImagesB. The right stream will alternate between images in rightImagesA and rightImagesB. They are either presented in random order (default) within those lists, or can be presented in the exact order listed by setting randomizeImageOrder to false.

The timing of all image presentations and the specific images presented is recorded in the event data.

This frame is displayed fullscreen; if the frame before it is not, that frame needs to include a manual "next" button so that there's a user interaction event to trigger fullscreen mode. (Browsers don't allow switching to fullscreen without a user event.) If the user leaves fullscreen, that event is recorded, but the trial is not paused.

Specifying media locations:

For any parameters that expect a list of audio/video sources, you can EITHER provide a list of src/type pairs with full paths like this:

    [
        {
            'src': 'http://.../video1.mp4',
            'type': 'video/mp4'
        },
        {
            'src': 'http://.../video1.webm',
            'type': 'video/webm'
        }
    ]

OR you can provide a single string 'stub', which will be expanded based on the parameter baseDir and the media types expected - either audioTypes or videoTypes as appropriate. For example, if you provide the audio source intro and baseDir is https://mystimuli.org/mystudy/, with audioTypes ['mp3', 'ogg'], then this will be expanded to:

    [
        {
            src: 'https://mystimuli.org/mystudy/mp3/intro.mp3',
            type: 'audio/mp3'
        },
        {
            src: 'https://mystimuli.org/mystudy/ogg/intro.ogg',
            type: 'audio/ogg'
        }
    ]

This allows you to simplify your JSON document a bit and also easily switch to a new version of your stimuli without changing every URL. You can mix source objects with full URLs and those using stubs within the same directory. However, any stimuli specified using stubs MUST be organized as expected under baseDir/MEDIATYPE/filename.MEDIATYPE.
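The stub expansion rule can be sketched in a few lines of JavaScript. The function name expandMediaSource and its signature are hypothetical; the frame performs this expansion itself, so this is only meant to make the baseDir/MEDIATYPE/filename.MEDIATYPE layout concrete.

```javascript
// Hypothetical helper illustrating the documented expansion rule;
// it is not the frame's own implementation.
function expandMediaSource(source, baseDir, types, kind) {
  // Lists of {src, type} objects are used as-is.
  if (typeof source !== 'string') {
    return source;
  }
  // A missing trailing slash on baseDir is added.
  const base = baseDir.endsWith('/') ? baseDir : baseDir + '/';
  return types.map((ext) => ({
    src: `${base}${ext}/${source}.${ext}`,
    type: `${kind}/${ext}`
  }));
}

const expanded = expandMediaSource(
  'intro',
  'https://mystimuli.org/mystudy/',
  ['mp3', 'ogg'],
  'audio'
);
console.log(expanded);
```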

Example usage:

    "frames": {
        "alt-trial": {
            "kind": "exp-lookit-change-detection",
            "baseDir": "https://www.mit.edu/~kimscott/placeholderstimuli/",
            "videoTypes": ["mp4", "webm"],
            "audioTypes": ["mp3", "ogg"],
            "trialLength": 15,
            "attnLength": 2,
            "fsAudio": "sample_1",
            "unpauseAudio": "return_after_pause",
            "pauseAudio": "pause",
            "videoSources": "attentiongrabber",
            "musicSources": "music_01",
            "audioSources": "video_01",
            "endAudioSources": "all_done",
            "border": "thick solid black",
            "leftImagesA": ["apple.jpg", "orange.jpg"],
            "rightImagesA": ["square.png", "tall.png", "wide.png"],
            "leftImagesB": ["apple.jpg", "orange.jpg"],
            "rightImagesB": ["apple.jpg", "orange.jpg"],
            "startWithA": true,
            "randomizeImageOrder": true,
            "displayMs": 500,
            "blankMs": 250,
            "containerColor": "white",
            "backgroundColor": "#abc"
        }
    }
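For the typical design described at the top of this page (one side alternating between two categories, the other side staying within one category), the four image lists could be generated rather than typed by hand. The sketch below is illustrative only: makeChangeDetectionFrame and the image file names are hypothetical, and the other frame parameters (baseDir, audio and video sources, timing) are omitted.

```javascript
// Hypothetical helper: builds the image-list part of a frame definition in
// which one side alternates between two categories and the other side
// stays within a single category.
function makeChangeDetectionFrame(changingSide, categoryA, categoryB) {
  if (changingSide !== 'left' && changingSide !== 'right') {
    throw new Error("changingSide must be 'left' or 'right'");
  }
  const changing = { A: categoryA, B: categoryB };
  // On the constant side, both lists come from the same category.
  const constant = { A: categoryA, B: categoryA };
  const left = changingSide === 'left' ? changing : constant;
  const right = changingSide === 'right' ? changing : constant;
  return {
    kind: 'exp-lookit-change-detection',
    leftImagesA: left.A,
    leftImagesB: left.B,
    rightImagesA: right.A,
    rightImagesB: right.B,
    startWithA: true,
    randomizeImageOrder: true
  };
}

const frame = makeChangeDetectionFrame(
  'left',
  ['cat1.jpg', 'cat2.jpg'],
  ['dog1.jpg', 'dog2.jpg']
);
console.log(JSON.stringify(frame, null, 2));
```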

Item Index

Methods

- destroyRecorder

- destroySessionRecorder

- exitFullscreen

- hideRecorder

- makeTimeEvent

- onRecordingStarted

- onSessionRecordingStarted

- serializeContent

- setupRecorder

- showFullscreen

- showRecorder

- startRecorder

- startSessionRecorder

- stopRecorder

- stopSessionRecorder

- whenPossibleToRecordObserver

- whenPossibleToRecordSessionObserver

Properties

- assetsToExpand

- attnLength

- audioOnly

- audioSources

- audioTypes

- autosave

- backgroundColor

- baseDir

- blankMs

- border

- containerColor

- displayFullscreen

- displayFullscreenOverride

- displayMs

- doRecording

- doUseCamera

- endAudioSources

- endSessionRecording

- fsAudio

- fsButtonID

- fullScreenElementId

- generateProperties

- leftImagesA

- leftImagesB

- maxRecordingLength

- maxUploadSeconds

- musicSources

- parameters

- pauseAudio

- randomizeImageOrder

- recorder

- recorderElement

- recorderReady

- rightImagesA

- rightImagesB

- selectNextFrame

- sessionAudioOnly

- sessionMaxUploadSeconds

- showWaitForRecordingMessage

- showWaitForUploadMessage

- startRecordingAutomatically

- startSessionRecording

- startWithA

- stoppedRecording

- trialLength

- unpauseAudio

- videoId

- videoList

- videoSources

- videoTypes

- waitForRecordingMessage

- waitForRecordingMessageColor

- waitForUploadMessage

- waitForUploadMessageColor

- waitForWebcamImage

- waitForWebcamVideo

Data collected

Methods

destroyRecorder

()

destroySessionRecorder

()

exitFullscreen

()

hideRecorder

()

makeTimeEvent

(eventName, [extra])

Create the time event payload for a particular frame / event. This can be overridden to add fields to every event sent by a particular frame.

Parameters:

- eventName
- [extra]

Returns:

Event type, time, and any additional metadata provided

onRecordingStarted

()

private

What to do when individual-frame recording starts.

onSessionRecordingStarted

()

private

What to do when session-level recording starts.

serializeContent

(eventTimings)

Each frame that extends ExpFrameBase will send at least an array eventTimings, a frame type, and any generateProperties back to the server upon completion. Individual frames may define additional properties that are sent.

Parameters:

- eventTimings Array

Returns:

setupRecorder

(element)

Parameters:

- element Node: A DOM node representing where to mount the recorder

Returns:

showFullscreen

()

showRecorder

()

startRecorder

()

Returns:

startSessionRecorder

()

Returns:

stopRecorder

()

Returns:

stopSessionRecorder

()

Returns:

whenPossibleToRecordObserver

()

whenPossibleToRecordSessionObserver

()

Properties

attnLength

Number

Minimum amount of time to show the attention-getter, in seconds. If 0, the attention-getter segment is skipped.

Default: 0

audioOnly

Number

Default: 0

audioSources

Object[]

Array of {src: 'url', type: 'MIMEtype'} source objects for instructions during attention-getter video

audioTypes

String[]

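List of audio types to expect for any audio source specified as a string stub rather than as a list of src/type pairs. If audioTypes is ['typeA', 'typeB'] and an audio source is given as intro, the audio source will be expanded out to

    [
        {
            src: 'baseDir' + 'typeA/intro.typeA',
            type: 'audio/typeA'
        },
        {
            src: 'baseDir' + 'typeB/intro.typeB',
            type: 'audio/typeB'
        }
    ]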

Default: ['mp3', 'ogg']

autosave

Number

private

Default: 1

backgroundColor

String

Color of background. See https://developer.mozilla.org/en-US/docs/Web/CSS/color_value for acceptable syntax: can use color names ('blue', 'red', 'green', etc.), or rgb hex values (e.g. '#800080' - include the '#')

Default: 'white'

baseDir

String

Base directory for where to find stimuli. Any image src values that are not full paths will be expanded by prefixing with baseDir + img/. Any audio/video src values provided as strings rather than objects with src and type will be expanded out to baseDir/avtype/[stub].avtype, where the potential avtypes are given by audioTypes and videoTypes.

baseDir should include a trailing slash (e.g., http://stimuli.org/myexperiment/); if a value is provided that does not end in a slash, one will be added.

Default: ''

border

String

Format of border to display around alternation streams, if any. See https://developer.mozilla.org/en-US/docs/Web/CSS/border for syntax.

Default: 'thin solid gray'

containerColor

String

Color of image stream container, if different from overall background. Defaults to backgroundColor if one is provided. See https://developer.mozilla.org/en-US/docs/Web/CSS/color_value for acceptable syntax: can use color names ('blue', 'red', 'green', etc.), or rgb hex values (e.g. '#800080' - include the '#')

Default: 'white'

displayFullscreenOverride

Boolean

Set to true to display this frame in fullscreen mode, even if the frame type is not always displayed fullscreen. (For instance, you might use this to keep a survey between test trials in fullscreen mode.)

Default: false

doUseCamera

Boolean

Default: true

endAudioSources

Object[]

Array of {src: 'url', type: 'MIMEtype'} source objects for audio after completion of trial (optional; used for last trial "okay to open your eyes now" announcement)

endSessionRecording

Boolean

Default: false

fsAudio

Object[]

Array of {src: 'url', type: 'MIMEtype'} source objects for audio played when study is paused due to not being fullscreen

fullScreenElementId

String

private

generateProperties

String

Function to generate additional properties for this frame (like {"kind": "exp-lookit-text"}) at the time the frame is initialized. Allows behavior of study to depend on what has happened so far (e.g., answers on a form or to previous test trials). Must be a valid Javascript function, returning an object, provided as a string.

Arguments that will be provided are: expData, sequence, child, pastSessions, conditions.

expData, sequence, and conditions are the same data as would be found in the session data shown on the Lookit experimenter interface under 'Individual Responses', except that they will only contain information up to this point in the study.

expData is an object consisting of frameId: frameData pairs; the data associated with a particular frame depends on the frame kind.

sequence is an ordered list of frameIds, corresponding to the keys in expData.

conditions is an object representing the data stored by any randomizer frames; keys are frameIds for randomizer frames and data stored depends on the randomizer used.

child is an object that has the following properties - use child.get(propertyName) to access:

- additionalInformation: String; additional information field from child form
- ageAtBirth: String; child's gestational age at birth in weeks. Possible values are "24" through "39", "na" (not sure or prefer not to answer), "<24" (under 24 weeks), and "40>" (40 or more weeks).
- birthday: Date object
- gender: "f" (female), "m" (male), "o" (other), or "na" (prefer not to answer)
- givenName: String, child's given name/nickname
- id: String, child UUID
- languageList: String, space-separated list of languages child is exposed to (2-letter codes)
- conditionList: String, space-separated list of conditions/characteristics of child from registration form, as used in criteria expression, e.g. "autism_spectrum_disorder deaf multiple_birth"

pastSessions is a list of previous response objects for this child and this study, ordered starting from most recent (at index 0 is this session!). Each has properties (access as pastSessions[i].get(propertyName)):

- completed: Boolean, whether they submitted an exit survey
- completedConsentFrame: Boolean, whether they got through at least a consent frame
- conditions: Object representing any conditions assigned by randomizer frames
- createdOn: Date object
- expData: Object consisting of frameId: frameData pairs
- globalEventTimings: list of any events stored outside of individual frames - currently just used for attempts to leave the study early
- sequence: ordered list of frameIds, corresponding to keys in expData
- isPreview: Boolean, whether this is from a preview session (possible in the event this is an experimenter's account)

Example:

    function(expData, sequence, child, pastSessions, conditions) {
        return {
            'blocks': [
                {
                    'text': 'Name: ' + child.get('givenName')
                },
                {
                    'text': 'Frame number: ' + sequence.length
                },
                {
                    'text': 'N past sessions: ' + pastSessions.length
                }
            ]
        };
    }

(This example is split across lines for readability; when added to JSON it would need to be on one line.)

Default: null
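Because the function has to be supplied as a single-line string inside the study JSON, it can be convenient to write it normally and convert it programmatically. The following is a minimal sketch of one way to do that in plain JavaScript; the mock child object and the frame ID '0-intro' are hypothetical test data, and collapsing whitespace this way is only safe when the function body contains no // comments and no string literals with significant runs of whitespace.

```javascript
// The example function, written across several lines for readability.
const generateProperties = function (expData, sequence, child, pastSessions, conditions) {
  return {
    blocks: [
      { text: 'Name: ' + child.get('givenName') },
      { text: 'Frame number: ' + sequence.length },
      { text: 'N past sessions: ' + pastSessions.length }
    ]
  };
};

// Collapse all whitespace runs so the source fits on one line, then let
// JSON.stringify handle the quoting needed inside a JSON document.
const oneLine = generateProperties.toString().replace(/\s+/g, ' ');
const frameJson = JSON.stringify({ generateProperties: oneLine });
console.log(frameJson);

// Local sanity check with mock arguments (hypothetical data).
const mockChild = { get: (name) => ({ givenName: 'Alex' }[name]) };
const result = generateProperties({}, ['0-intro'], mockChild, [{}], {});
console.log(result.blocks[0].text); // Name: Alex
```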

leftImagesA

String[]

Set A of images to display on left of screen. Left stream will alternate between images from set A and from set B. Elements of list can be full URLs or relative paths starting from baseDir.

leftImagesB

String[]

Set B of images to display on left of screen. Left stream will alternate between images from set A and from set B. Elements of list can be full URLs or relative paths starting from baseDir.

maxRecordingLength

Number

Default: 7200

maxUploadSeconds

Number

Default: 5

musicSources

Object[]

Array of {src: 'url', type: 'MIMEtype'} source objects for music during trial

parameters

Object

An object containing values for any parameters (variables) to use in this frame. Any property VALUES in this frame that match any of the property NAMES in parameters will be replaced by the corresponding parameter value. For example, suppose your frame is:

    {
        'kind': 'FRAME_KIND',
        'parameters': {
            'FRAME_KIND': 'exp-lookit-text'
        }
    }

Then the frame kind will be exp-lookit-text. This may be useful if you need to repeat values for different frame properties, especially if your frame is actually a randomizer or group. You may use parameters nested within objects (at any depth) or within lists.

You can also use selectors to randomly sample from or permute a list defined in parameters. Suppose STIMLIST is defined in parameters, e.g. a list of potential stimuli. Rather than just using STIMLIST as a value in your frames, you can also:

- Select the Nth element (0-indexed) of the value of STIMLIST (will cause an error if N >= STIMLIST.length):

    'parameterName': 'STIMLIST#N'

- Select (uniformly) a random element of the value of STIMLIST:

    'parameterName': 'STIMLIST#RAND'

- Set parameterName to a random permutation of the value of STIMLIST:

    'parameterName': 'STIMLIST#PERM'

- Select the next element in a random permutation of the value of STIMLIST, which is used across all substitutions in this randomizer. This allows you, for instance, to provide a list of possible images in your parameterSet, and use a different one each frame with the subset/order randomized per participant. If more STIMLIST#UNIQ parameters than elements of STIMLIST are used, we loop back around to the start of the permutation generated for this randomizer.

    'parameterName': 'STIMLIST#UNIQ'

Default: {}
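To make the substitution rule concrete, here is a small illustrative re-implementation in JavaScript. It is a sketch under stated assumptions, not the code Lookit runs: the function name substitute is hypothetical, it takes the parameters object as a separate argument, and it handles only exact name matches plus the #N and #RAND selectors (#PERM and #UNIQ are left out for brevity).

```javascript
// Hypothetical sketch of parameter substitution (not Lookit's own code).
// Walks nested objects and lists and replaces string values that match a
// parameter name, or a NAME#N / NAME#RAND selector on a list parameter.
function substitute(value, parameters, random = Math.random) {
  if (Array.isArray(value)) {
    return value.map((item) => substitute(item, parameters, random));
  }
  if (value !== null && typeof value === 'object') {
    const out = {};
    for (const [key, inner] of Object.entries(value)) {
      out[key] = substitute(inner, parameters, random);
    }
    return out;
  }
  if (typeof value !== 'string') {
    return value;
  }
  // Exact match on a parameter name.
  if (Object.prototype.hasOwnProperty.call(parameters, value)) {
    return parameters[value];
  }
  const [name, selector] = value.split('#');
  const list = Object.prototype.hasOwnProperty.call(parameters, name)
    ? parameters[name]
    : undefined;
  if (selector === undefined || !Array.isArray(list)) {
    return value;
  }
  if (selector === 'RAND') {
    return list[Math.floor(random() * list.length)];
  }
  if (/^\d+$/.test(selector)) {
    const index = Number(selector);
    if (index >= list.length) {
      throw new RangeError(value + ': index out of range');
    }
    return list[index];
  }
  return value;
}

const parameters = {
  FRAME_KIND: 'exp-lookit-text',
  STIMLIST: ['apple.jpg', 'orange.jpg']
};
const resolved = substitute({ kind: 'FRAME_KIND', images: ['STIMLIST#1'] }, parameters);
console.log(resolved); // { kind: 'exp-lookit-text', images: [ 'orange.jpg' ] }
```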

pauseAudio

Object[]

Array of {src: 'url', type: 'MIMEtype'} source objects for audio played upon pausing study

randomizeImageOrder

Boolean

Whether to randomize image presentation order within the lists leftImagesA, leftImagesB, rightImagesA, and rightImagesB. If true (default), the order of presentation is randomized. Each time all the images in one list have been presented, the order is randomized again for the next 'round.' If false, the order of presentation is as written in the list. Once all images are presented, we loop back around to the first image and start again.

Example of randomization: suppose we have defined

    leftImagesA: ['apple', 'banana', 'cucumber'],
    leftImagesB: ['aardvark', 'bat'],
    randomizeImageOrder: true,
    startWithA: true

And suppose the timing is such that we end up with 10 images total. Here is a possible sequence of images shown on the left:

['banana', 'aardvark', 'apple', 'bat', 'cucumber', 'bat', 'cucumber', 'aardvark', 'apple', 'bat']

Default: true
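The ordering rule for randomizeImageOrder can be simulated with a short script, which may help when checking what sequences a given configuration can produce. This is a sketch of the behavior as described in this entry, not the frame's own code; simulateStream, shuffled, and the injectable random option are hypothetical names introduced for illustration.

```javascript
// Fisher-Yates shuffle that returns a new array and leaves the input intact.
function shuffled(list, random) {
  const copy = list.slice();
  for (let i = copy.length - 1; i > 0; i--) {
    const j = Math.floor(random() * (i + 1));
    [copy[i], copy[j]] = [copy[j], copy[i]];
  }
  return copy;
}

// Hypothetical simulation of one side's image stream.
function simulateStream(imagesA, imagesB, count, options = {}) {
  const {
    startWithA = true,
    randomizeImageOrder = true,
    random = Math.random
  } = options;
  const makeSource = (list) => {
    let queue = [];
    return () => {
      if (queue.length === 0) {
        // Start a new round: reshuffle, or reuse the order as written.
        queue = randomizeImageOrder ? shuffled(list, random) : list.slice();
      }
      return queue.shift();
    };
  };
  const nextA = makeSource(imagesA);
  const nextB = makeSource(imagesB);
  const stream = [];
  for (let i = 0; i < count; i++) {
    // Even positions use the starting list, odd positions use the other one.
    const useA = (i % 2 === 0) === startWithA;
    stream.push(useA ? nextA() : nextB());
  }
  return stream;
}

console.log(simulateStream(['apple', 'banana', 'cucumber'], ['aardvark', 'bat'], 10));
```

With randomizeImageOrder set to false in the options, the same call returns the lists in written order, looping back to the first image of each list once it is exhausted.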

recorder

VideoRecorder

private

recorderReady

Boolean

private

rightImagesA

String[]

Set A of images to display on right of screen. Right stream will alternate between images from set A and from set B. Elements of list can be full URLs or relative paths starting from baseDir.

rightImagesB

String[]

Set B of images to display on right of screen. Right stream will alternate between images from set A and from set B. Elements of list can be full URLs or relative paths starting from baseDir.

selectNextFrame

String

Function to select which frame index to go to when using the 'next' action on this frame. Allows flexible looping / short-circuiting based on what has happened so far in the study (e.g., once the child answers N questions correctly, move on to next segment). Must be a valid Javascript function, returning a number from 0 through frames.length - 1, provided as a string.

Arguments that will be provided are: frames, frameIndex, expData, sequence, child, pastSessions

frames is an ordered list of frame configurations for this study; each element is an object corresponding directly to a frame you defined in the JSON document for this study (but with any randomizer frames resolved into the particular frames that will be used this time).

frameIndex is the index in frames of the current frame

expData is an object consisting of frameId: frameData pairs; the data associated with a particular frame depends on the frame kind.

sequence is an ordered list of frameIds, corresponding to the keys in expData.

child is an object that has the following properties - use child.get(propertyName) to access:

- additionalInformation: String; additional information field from child form
- ageAtBirth: String; child's gestational age at birth in weeks. Possible values are "24" through "39", "na" (not sure or prefer not to answer), "<24" (under 24 weeks), and "40>" (40 or more weeks).
- birthday: timestamp in format "Mon Apr 10 2017 20:00:00 GMT-0400 (Eastern Daylight Time)"
- gender: "f" (female), "m" (male), "o" (other), or "na" (prefer not to answer)
- givenName: String, child's given name/nickname
- id: String, child UUID

pastSessions is a list of previous response objects for this child and this study, ordered starting from most recent (at index 0 is this session!). Each has properties (access as pastSessions[i].get(propertyName)):

- completed: Boolean, whether they submitted an exit survey
- completedConsentFrame: Boolean, whether they got through at least a consent frame
- conditions: Object representing any conditions assigned by randomizer frames
- createdOn: timestamp in format "Thu Apr 18 2019 12:33:26 GMT-0400 (Eastern Daylight Time)"
- expData: Object consisting of frameId: frameData pairs
- globalEventTimings: list of any events stored outside of individual frames - currently just used for attempts to leave the study early
- sequence: ordered list of frameIds, corresponding to keys in expData

Example that just sends us to the last frame of the study no matter what:

`"function(frames, frameIndex, frameData, expData, sequence, child, pastSessions) {return frames.length - 1;}"`

Default: null
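A slightly richer selector might short-circuit to the end of the study after a fixed number of frames and otherwise just advance. The sketch below is an illustration only: the cutoff of 10 frames is a hypothetical value, and the argument order follows the one-line example string given for this property. Comments are kept outside the function body so that collapsing it to one line does not comment out the remaining code.

```javascript
// Hypothetical rule: once 10 or more frames have been seen (sequence.length),
// jump to the last frame; otherwise advance by one without running past the end.
const selectNextFrame = function (frames, frameIndex, frameData, expData, sequence, child, pastSessions) {
  const lastIndex = frames.length - 1;
  if (sequence.length >= 10) {
    return lastIndex;
  }
  return Math.min(frameIndex + 1, lastIndex);
};

// Single-line string form, ready to paste as a JSON string value.
const selectNextFrameString = JSON.stringify(
  selectNextFrame.toString().replace(/\s+/g, ' ')
);
console.log(selectNextFrameString);
```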

sessionAudioOnly

Number

Default: 0

sessionMaxUploadSeconds

Number

Default: 10

showWaitForRecordingMessage

Boolean

Default: true

showWaitForUploadMessage

Boolean

Default: true

startRecordingAutomatically

Boolean

Default: false

startSessionRecording

Boolean

Default: false

startWithA

Boolean

Whether to start with the 'A' image list on both left and right. If true, both sides start with their respective A image lists; if false, both sides start with their respective B image lists.

Default: true

stoppedRecording

Boolean

private

trialLength

Number

Length of alternation trial in seconds. This refers only to the section of the trial where the alternating image streams are presented - it does not count any announcement phase.

Default: 60

unpauseAudio

Object[]

Array of {src: 'url', type: 'MIMEtype'} source objects for audio played upon unpausing study

videoId

String

private

Identifier of the form videoStream_<experimentId>_<frameId>_<sessionId>_timestampMS_RRR, where RRR are random numeric digits.

videoList

List

private

videoSources

Object[]

Array of {src: 'url', type: 'MIMEtype'} source objects for attention-getter video (should be loopable)

videoTypes

String[]

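List of video types to expect for any video source specified as a string stub rather than as a list of src/type pairs. If videoTypes is ['typeA', 'typeB'] and a video source is given as intro, the video source will be expanded out to

    [
        {
            src: 'baseDir' + 'typeA/intro.typeA',
            type: 'video/typeA'
        },
        {
            src: 'baseDir' + 'typeB/intro.typeB',
            type: 'video/typeB'
        }
    ]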

Default: ['mp4', 'webm']

waitForRecordingMessage

String

Default: 'Please wait... <br><br> starting webcam recording'

waitForRecordingMessageColor

String

Default: 'white'

waitForUploadMessage

String

Default: 'Please wait... <br><br> uploading video'

waitForUploadMessageColor

String

Default: 'white'

waitForWebcamImage

String

Either a full URL or a path relative to `baseDir/img/` if this frame otherwise supports use of `baseDir`.

Default: ''

waitForWebcamVideo

String

Either a list of `{'src': 'https://...', 'type': '...'}` objects (e.g. providing both webm and mp4 versions at specified URLs) or a single string relative to `baseDir/<EXT>/` if this frame otherwise supports use of `baseDir`.

Default: ''

Data keys collected

These are the fields that will be captured by this frame and sent back to the Lookit server. Each of these fields will correspond to one row of the CSV frame data for a given response - the row will have key set to the data key name, and value set to the value for this response.

Equivalently, this data will be available in the exp_data field of the response JSON data.

eventTimings

Ordered list of events captured during this frame (oldest to newest). Each event is represented as an object with at least the properties {'eventType': EVENTNAME, 'timestamp': TIMESTAMP}. See Events tab for details of events that might be captured.
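When analyzing a response, the eventTimings list can be used to check how long each image pair actually stayed on screen. The sketch below pairs each presentImages event with the following clearImages event. It rests on assumptions that should be checked against real data: that timestamps can be parsed by the JavaScript Date constructor (ISO strings are used in the hypothetical sample), and that stored event names end with the event names listed in this document (endsWith is used in case they carry a prefix). The helper name presentationDurations is hypothetical.

```javascript
// Hypothetical analysis helper: returns, in milliseconds, the time between
// each presentImages event and the next clearImages event.
function presentationDurations(eventTimings) {
  const durations = [];
  let shownAt = null;
  for (const event of eventTimings) {
    const time = new Date(event.timestamp).getTime();
    if (event.eventType.endsWith('presentImages')) {
      shownAt = time;
    } else if (event.eventType.endsWith('clearImages') && shownAt !== null) {
      durations.push(time - shownAt);
      shownAt = null;
    }
  }
  return durations;
}

// Made-up sample data in the documented {eventType, timestamp} shape.
const sampleEvents = [
  { eventType: 'startTestTrial', timestamp: '2020-01-01T12:00:00.000Z' },
  { eventType: 'presentImages', timestamp: '2020-01-01T12:00:00.010Z' },
  { eventType: 'clearImages', timestamp: '2020-01-01T12:00:00.512Z' },
  { eventType: 'presentImages', timestamp: '2020-01-01T12:00:00.760Z' },
  { eventType: 'clearImages', timestamp: '2020-01-01T12:00:01.262Z' }
];
console.log(presentationDurations(sampleEvents)); // [ 502, 502 ]
```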

frameType

Type of frame: EXIT (exit survey), CONSENT (consent or assent frame), or DEFAULT (anything else)

Events

clearImages

Records each time images are cleared from display

enteredFullscreen

leftFullscreen

nextFrame

Move to next frame

pauseVideo

presentImages

Immediately after making images visible

previousFrame

Move to previous frame

recorderReady

sessionRecorderReady

startIntro

Immediately before starting intro/announcement segment

startSessionRecording

startTestTrial

Immediately before starting test trial segment

stoppingCapture

Just before stopping webcam video capture

stopSessionRecording

unpauseVideo

videoStreamConnection

Event Payload:

- status String: status of video stream connection, e.g. 'NetConnection.Connect.Success' if successful